Weak supervision

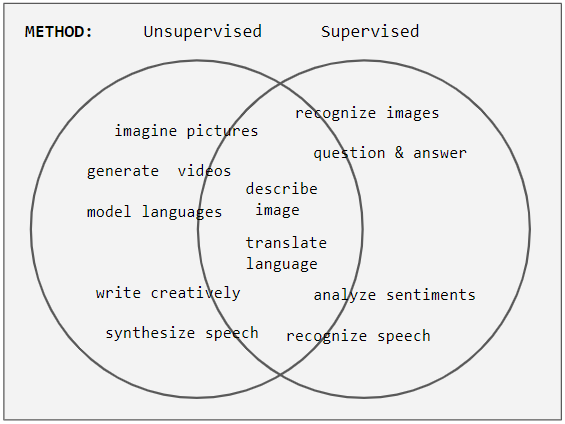

Weak supervision (also known as semi-supervised learning) is a paradigm in machine learning whose relevance and notability increased with the advent of large language models, owing to the large amount of data required to train them. It is characterized by combining a small amount of human-labeled data (the only kind used in the more expensive and time-consuming supervised learning paradigm) with a large amount of unlabeled data (the only kind used in the unsupervised learning paradigm). In other words, the desired output values are provided only for a subset of the training data. The remaining data is unlabeled or imprecisely labeled.

Intuitively, the learning problem can be seen as an exam, and the labeled data as sample problems that the teacher solves for the class as an aid in solving another set of problems. In the transductive setting, these unsolved problems act as exam questions. In the inductive setting, they become practice problems of the sort that will make up the exam.

Technically, it can be viewed as performing clustering and then labeling the clusters with the labeled data, pushing the decision boundary away from high-density regions, or learning an underlying lower-dimensional manifold on which the data reside.
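The first of these views, clustering followed by labeling the clusters, can be illustrated with a minimal sketch. Everything here is an assumption made for illustration and not taken from the article: the function names `farthest_point_init`, `kmeans` and `cluster_then_label`, the choice of k-means as the clustering step, the deterministic farthest-point initialization, and the use of `-1` for clusters that contain no labeled point.

```python
import numpy as np

def farthest_point_init(X, k):
    """Pick k starting centers: the first point, then repeatedly the point
    farthest from the centers chosen so far (illustrative choice)."""
    centers = [X[0]]
    for _ in range(k - 1):
        d = np.linalg.norm(X[:, None, :] - np.array(centers)[None, :, :], axis=2)
        centers.append(X[d.min(axis=1).argmax()])
    return np.array(centers, dtype=float)

def nearest_center(X, centers):
    """Index of the closest center for every row of X."""
    d = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
    return d.argmin(axis=1)

def kmeans(X, k, n_iter=50):
    """Plain Lloyd iterations; the labels are not used at this stage."""
    centers = farthest_point_init(X, k)
    for _ in range(n_iter):
        assign = nearest_center(X, centers)
        for j in range(k):
            if np.any(assign == j):  # leave an empty cluster's center unchanged
                centers[j] = X[assign == j].mean(axis=0)
    return centers, nearest_center(X, centers)

def cluster_then_label(X, labeled_idx, labels, k):
    """Cluster all points, then give each cluster the majority label of the
    labeled points that fall inside it. Clusters with no labeled point get -1."""
    _, assign = kmeans(X, k)
    cluster_label = {}
    for j in range(k):
        votes = labels[assign[labeled_idx] == j]
        if len(votes) > 0:
            values, counts = np.unique(votes, return_counts=True)
            cluster_label[j] = values[counts.argmax()]
    return np.array([cluster_label.get(j, -1) for j in assign])
```

Here `X` holds all points (labeled and unlabeled), `labeled_idx` gives the row indices of the labeled points, and `labels` gives their integer classes in the same order. The sketch is transductive: it only assigns labels to the points it was given.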

History

The heuristic approach of self-training (also known as self-learning or self-labeling) is historically the oldest approach to semi-supervised learning,[2] with examples of applications starting in the 1960s.[5]

The transductive learning framework was formally introduced by Vladimir Vapnik in the 1970s.[6] Interest in inductive learning using generative models also began in the 1970s. A probably approximately correct learning bound for semi-supervised learning of a Gaussian mixture was demonstrated by Ratsaby and Venkatesh in 1995.[7]

Methods

Generative models

Generative approaches to statistical learning first seek to estimate $p(x \mid y)$, the distribution of data points belonging to each class. The probability $p(y \mid x)$ that a given point $x$ has label $y$ is then proportional to $p(x \mid y)\,p(y)$ by Bayes' rule. Semi-supervised learning with generative models can be viewed either as an extension of supervised learning (classification plus information about $p(x)$) or as an extension of unsupervised learning (clustering plus some labels).
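As a small illustration of the Bayes' rule step, the sketch below computes the class posterior from class-conditional densities and class priors. The one-dimensional Gaussian form of the class-conditionals, the class names, and the helper names `gaussian_pdf` and `posterior` are assumptions chosen for the example, not part of the article.

```python
import math

def gaussian_pdf(x, mean, std):
    """Density of a one-dimensional Gaussian, used here as p(x | y)."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def posterior(x, class_params, priors):
    """Return p(y | x) for every class y.

    class_params maps a class to its (mean, std); priors maps a class to p(y).
    """
    # Unnormalized posterior: p(x | y) * p(y)
    joint = {y: priors[y] * gaussian_pdf(x, *class_params[y]) for y in priors}
    evidence = sum(joint.values())  # p(x), the normalizing constant
    return {y: joint[y] / evidence for y in joint}

# Hypothetical two-class setup with equal priors.
params = {"a": (-1.0, 1.0), "b": (1.0, 1.0)}
priors = {"a": 0.5, "b": 0.5}
print(posterior(2.0, params, priors))
```

The normalizing constant computed inside `posterior` is the marginal density of $x$, which is the quantity that unlabeled data can help estimate.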

Generative models assume that the distributions take some particular form $p(x \mid y, \theta)$ parameterized by the vector $\theta$. If these assumptions are incorrect, the unlabeled data may actually decrease the accuracy of the solution relative to what would have been obtained from labeled data alone.[8]

However, if the assumptions are correct, then the unlabeled data necessarily improves performance.[7]

The unlabeled data are distributed according to a mixture of individual-class distributions. In order to learn the mixture distribution from the unlabeled data, it must be identifiable, that is, different parameters must yield different summed distributions. Gaussian mixture distributions are identifiable and commonly used for generative models.

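The parameterized joint distribution can be written as $p(x, y \mid \theta) = p(y \mid \theta)\,p(x \mid y, \theta)$ by using the chain rule. Each parameter vector $\theta$ is associated with a decision function $f_\theta(x) = \operatorname{argmax}_y\, p(y \mid x, \theta)$.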

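The parameter $\theta$ is then chosen based on fit to both the labeled and unlabeled data, with the unlabeled term weighted by $\lambda$:

$$\underset{\theta}{\operatorname{argmax}} \left( \log p\left(\{x_i, y_i\}_{i=1}^{l} \mid \theta\right) + \lambda \log p\left(\{x_i\}_{i=l+1}^{l+u} \mid \theta\right) \right)$$

Here $l$ is the number of labeled examples and $u$ is the number of unlabeled examples.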
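One way to carry out such a fit is expectation-maximization on a Gaussian mixture with one component per class. The sketch below assumes an objective of the form (labeled log-likelihood) plus $\lambda$ times (unlabeled log-likelihood), one-dimensional data, integer class labels starting at 0, and at least one labeled point per class. The function names, the initialization from the labeled points, and the small variance floor are illustrative choices and not a method prescribed by the article.

```python
import numpy as np

def log_gauss(x, mean, std):
    """Log density of a one-dimensional Gaussian."""
    return -0.5 * np.log(2.0 * np.pi * std ** 2) - 0.5 * ((x - mean) / std) ** 2

def log_joint(x, pi, mu, sigma):
    """Matrix of log p(x_i, y = k | theta), shape (n_points, n_classes)."""
    return np.log(pi)[None, :] + log_gauss(x[:, None], mu[None, :], sigma[None, :])

def objective(x_lab, y_lab, x_unl, pi, mu, sigma, lam):
    """Labeled log-likelihood plus lam times unlabeled log-likelihood."""
    lab = log_joint(x_lab, pi, mu, sigma)[np.arange(len(x_lab)), y_lab].sum()
    unl = np.logaddexp.reduce(log_joint(x_unl, pi, mu, sigma), axis=1).sum()
    return lab + lam * unl

def m_step(x, w):
    """Weighted maximum-likelihood update of priors, means and std devs."""
    nk = w.sum(axis=0)
    pi = nk / nk.sum()
    mu = (w * x[:, None]).sum(axis=0) / nk
    var = (w * (x[:, None] - mu[None, :]) ** 2).sum(axis=0) / nk
    return pi, mu, np.sqrt(var + 1e-6)  # small floor avoids zero variance

def fit(x_lab, y_lab, x_unl, n_classes, lam=1.0, n_iter=100):
    x = np.concatenate([x_lab, x_unl])
    onehot = np.eye(n_classes)[y_lab]  # labeled points have known classes
    # Initialize from the labeled points alone (unlabeled weights are zero).
    w = np.vstack([onehot, np.zeros((len(x_unl), n_classes))])
    pi, mu, sigma = m_step(x, w)
    for _ in range(n_iter):
        # E-step: soft class memberships for the unlabeled points only.
        lj = log_joint(x_unl, pi, mu, sigma)
        resp = np.exp(lj - np.logaddexp.reduce(lj, axis=1, keepdims=True))
        # M-step: unlabeled memberships are down- or up-weighted by lam.
        w = np.vstack([onehot, lam * resp])
        pi, mu, sigma = m_step(x, w)
    return pi, mu, sigma

def predict(x, pi, mu, sigma):
    """Decision function: the class with the largest posterior for each point."""
    return log_joint(x, pi, mu, sigma).argmax(axis=1)
```

Setting `lam=0` reduces the fit to supervised estimation from the labeled points, while larger values let the unlabeled data dominate. `predict` applies to new points as well, so this sketch is inductive.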

In human cognition

Human responses to formal semi-supervised learning problems have yielded varying conclusions about the degree of influence of the unlabeled data.[18] More natural learning problems may also be viewed as instances of semi-supervised learning. Much of human concept learning involves a small amount of direct instruction (e.g. parental labeling of objects during childhood) combined with large amounts of unlabeled experience (e.g. observation of objects without naming or counting them, or at least without feedback).

Human infants are sensitive to the structure of unlabeled natural categories such as images of dogs and cats or male and female faces.[19] Infants and children take into account not only unlabeled examples, but the sampling process from which labeled examples arise.[20][21]